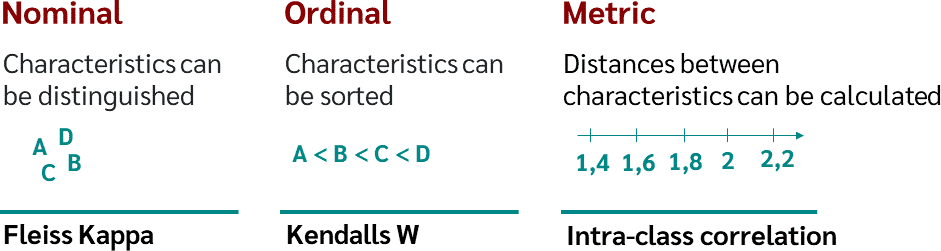

Fleiss Kappa levels to ascertain the level of agreement between raters | Download Scientific Diagram

Simulating and estimating agreement in the presence of multiple raters and covariates - McKenzie - 2023 - Statistics in Medicine - Wiley Online Library

Methods of assessing categorical agreement between correlated screening tests in clinical studies

(PDF) Testing the Normal Approximation and Minimal Sample Size Requirements of Weighted Kappa When the Number of Categories is Large

Percentage agreement (Fleiss' Kappa) between three raters for each category | Download Scientific Diagram

(PDF) Measuring Inter-observer Agreement in Contour Delineation of Medical Imaging in a Dummy Run Using Fleiss' Kappa

Fleiss Kappa statistic for three experts on 600 instances of the data set. | Download Scientific Diagram

![Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/maxresdefault.jpg)